Unifying Framework for Deep Learning on Non-Euclidean Domains

- 2014: Spectral graph convolutions proposed (Joan Bruna et al.)
- 2016: ChebNet, efficient spectral filtering on graphs (Defferrard, Bresson, Vandergheynst)
- 2016: Group Equivariant CNNs (Cohen and Welling)
- 2017: Graph Convolutional Networks (GCNs) (Kipf and Welling)
- 2017: Term 'geometric deep learning' coined (Bronstein et al., IEEE Signal Processing Magazine)
- 2017: Neural Message Passing framework (Gilmer et al.)
- 2020: AlphaFold2 wins CASP14 (DeepMind)
- 2021: Graphormer wins OGB-LSC at KDD Cup (Ying et al., Microsoft)
- 2021: "5G" proto-book (Grids, Groups, Graphs, Geodesics, Gauges) published (Bronstein, Bruna, Cohen, Velickovic)
- 2022: GPS Graph Transformer framework (Rampasek et al.)
- 2023: GraphCast outperforms ECMWF's HRES weather forecasts (Lam et al., DeepMind)
- 2023: GNoME discovers 2.2M new crystal structures (Merchant et al., DeepMind)

Geometric deep learning (GDL) is a class of deep learning techniques that generalize neural network architectures from Euclidean-structured data — images, text, audio — to non-Euclidean domains such as graphs, manifolds, and point clouds.1 The field connects successful deep learning architectures to the symmetries of their input domains using group theory and differential geometry, providing both a unifying mathematical framework for existing models and a constructive method for designing new ones.2

Convolutional neural networks (CNNs) exploit translation symmetry: a filter that detects an edge works equally well at any position in an image, so weights can be shared across all positions.1 Recurrent neural networks similarly exploit the sequential structure of chains. But social networks, molecular structures, protein interaction maps, 3D meshes, and the surface of the Earth are not grids — they are graphs or curved surfaces.3 Forcing this data into grid representations introduces distortions and discards structural information.1

Geometric deep learning starts from the question: what properties make CNNs effective on grids, and how can those properties be preserved on non-Euclidean domains?2

Felix Klein's 1872 Erlangen Program classified geometries by their symmetry groups.2 Bronstein, Bruna, Cohen, and Velickovic applied this idea to deep learning in their 2021 proto-book "Geometric Deep Learning: Grids, Groups, Graphs, Geodesics, and Gauges." They showed that CNNs, graph neural networks (GNNs), recurrent neural networks, and Transformers are all instances of a single design principle: learning functions that respect the symmetries of their domain.2

The central concept is equivariance. A layer is equivariant to a group of transformations if transforming the input produces a correspondingly transformed output.4 For a CNN, the group is translations on the pixel grid. For a GNN, it is permutations of nodes. For data on a sphere, it is the rotation group SO(3).2,4
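
The Python sketch below illustrates the equivariance property for the CNN case. Circular 1D convolution commutes with cyclic shifts: shifting the input and then convolving gives the same result as convolving and then shifting the output. The sketch makes two assumptions. The circular (wrap-around) boundary is chosen so that shifts are exact symmetries of a finite grid. The filter values are arbitrary.

```python
def shift(x, s):
    """Cyclically shift a list to the right by s positions (a translation on a periodic grid)."""
    n = len(x)
    return [x[(i - s) % n] for i in range(n)]


def circular_conv(x, w):
    """Circular cross-correlation: the same filter w is applied at every position of x."""
    n = len(x)
    return [sum(w[k] * x[(i + k) % n] for k in range(len(w))) for i in range(n)]


x = [0.0, 1.0, 3.0, 2.0, 0.0, -1.0]
w = [0.5, -1.0, 0.25]  # arbitrary example filter

for s in range(len(x)):
    transform_then_layer = circular_conv(shift(x, s), w)
    layer_then_transform = shift(circular_conv(x, w), s)
    print(s, transform_then_layer == layer_then_transform)
```

With zero padding instead of wrap-around, the equality fails near the boundaries. This is why translation equivariance in practical CNNs holds only approximately at image edges.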

This yields a taxonomy of architectures organized by domain (grids, groups, graphs, geodesics, gauges) and the symmetry group each architecture respects.2

The roots of geometric deep learning lie in spectral graph theory. In 2014, Joan Bruna and colleagues defined graph convolutions as filters in the Fourier domain of the graph Laplacian, that is, in the basis of its eigenvectors. This was the first principled generalization of CNNs to irregular graphs.5 These spectral methods were computationally expensive because they required an eigendecomposition of the Laplacian. Because the filters were tied to one graph's eigenbasis, they also did not transfer between graphs with different structures.6
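
In one common formulation of spectral graph convolution, A is the adjacency matrix and D is the diagonal degree matrix. The normalized Laplacian is diagonalized by its orthonormal eigenvectors U. The graph Fourier transform of a node signal x is U^T x, and a spectral filter g_θ scales each frequency independently. The notation below is standard but is not taken from the source.

```latex
L = I - D^{-1/2} A D^{-1/2} = U \Lambda U^{\top}, \qquad
\hat{x} = U^{\top} x, \qquad
g_\theta \star x = U \, g_\theta(\Lambda) \, U^{\top} x
```

These equations show where the two limitations come from:

- Computing U costs O(n^3) for a graph with n nodes, and each filtering step multiplies by a dense n × n matrix.
- U is specific to one graph, so filter coefficients learned in one eigenbasis have no direct meaning on another graph.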

Two developments in 2016–2017 made graph neural networks practical. Defferrard, Bresson, and Vandergheynst introduced ChebNet, which approximated spectral filters with Chebyshev polynomials of the Laplacian. A degree-K polynomial filter needs no eigendecomposition and only mixes information within K hops, which makes graph convolutions efficient and spatially localized.6 Kipf and Welling simplified this further with Graph Convolutional Networks (GCNs): a first-order approximation of spectral graph convolutions that achieved state-of-the-art results on semi-supervised node classification benchmarks.11
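
The Python sketch below illustrates why ChebNet's filters are cheap and localized. It assumes the common formulation with the normalized Laplacian and λ_max = 2, which is an upper bound on that Laplacian's eigenvalues. The coefficients `theta` and the helper names are illustrative; in ChebNet the coefficients are learned.

```python
import math


def normalized_laplacian(adj):
    """L = I - D^{-1/2} A D^{-1/2} for a dense 0/1 adjacency matrix (no isolated nodes assumed)."""
    n = len(adj)
    deg = [sum(row) for row in adj]
    return [[(1.0 if i == j else 0.0) - adj[i][j] / math.sqrt(deg[i] * deg[j])
             for j in range(n)] for i in range(n)]


def matvec(m, v):
    """Dense matrix-vector product."""
    return [sum(mij * vj for mij, vj in zip(row, v)) for row in m]


def cheb_filter(adj, x, theta, lambda_max=2.0):
    """Return sum_k theta[k] * T_k(L_s) x, where L_s = 2 L / lambda_max - I.

    Uses the Chebyshev recurrence T_0 = I, T_1 = L_s, T_k = 2 L_s T_{k-1} - T_{k-2},
    so only matrix-vector products are needed (no eigendecomposition).
    """
    lap = normalized_laplacian(adj)
    n = len(x)
    # Rescale the spectrum from [0, lambda_max] to [-1, 1], where Chebyshev polynomials live.
    l_s = [[2.0 * lap[i][j] / lambda_max - (1.0 if i == j else 0.0) for j in range(n)]
           for i in range(n)]
    t_prev, t_curr = list(x), matvec(l_s, x)  # T_0 x and T_1 x
    out = [theta[0] * v for v in t_prev]
    if len(theta) > 1:
        out = [o + theta[1] * v for o, v in zip(out, t_curr)]
    for k in range(2, len(theta)):
        t_next = [2.0 * a - b for a, b in zip(matvec(l_s, t_curr), t_prev)]
        out = [o + theta[k] * v for o, v in zip(out, t_next)]
        t_prev, t_curr = t_curr, t_next
    return out


# Path graph 0-1-2-3-4-5 with an impulse at node 0 and a degree-2 filter.
path = [[1 if abs(i - j) == 1 else 0 for j in range(6)] for i in range(6)]
y = cheb_filter(path, [1.0, 0.0, 0.0, 0.0, 0.0, 0.0], theta=[0.5, 0.3, 0.2])
print([round(v, 4) for v in y])  # nodes 3, 4, 5 (more than 2 hops away) stay at 0
```

Each multiplication by L_s moves information by one hop. A degree-K filter therefore touches only the K-hop neighborhood, and its cost grows with the number of edges rather than with n^3.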

The term "geometric deep learning" was introduced by Michael Bronstein and collaborators in a 2017 IEEE Signal Processing Magazine review surveying generalizations of deep learning to non-Euclidean domains.3 In the same period, Monti et al. proposed mixture model CNNs (MoNet) as a unified framework for graph and manifold convolutions,7 and Cohen and Welling introduced group equivariant CNNs, extending translation equivariance to discrete rotation and reflection groups.8

The 2021 proto-book by Bronstein, Bruna, Cohen, and Velickovic consolidated these threads into a single geometric framework connecting grids, groups, graphs, geodesics, and gauges.2

A symmetry is a transformation that preserves domain structure. Translations preserve pixel grids; node permutations that preserve the edge set are symmetries of a graph; rotations are symmetries of the sphere.2 Networks that encode these symmetries do not need to learn the same pattern separately for every transformed version of the input, which typically reduces the number of training examples they require.4

Invariance means the output does not change under transformation: f(Tx) = f(x). Graph-level classification, for example, should be invariant to node relabeling.4 Equivariance means the output transforms correspondingly: f(Tx) = T'f(x), where T' is the same transformation acting on the output space. For example, the atomic force vectors predicted for a rotated molecule should be the correspondingly rotated versions of the original predictions.2
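
Both definitions can be checked for node relabelings with a small Python sketch. To keep it short, the example ignores edges; a real GNN layer would also relabel the adjacency structure. `node_layer` is a hypothetical per-node function.

```python
def permute(features, perm):
    """Relabel nodes: new node i is old node perm[i]."""
    return [features[p] for p in perm]


def node_layer(features):
    """A hypothetical per-node map applied identically to every node (permutation-equivariant)."""
    return [[2.0 * a - b, a * b] for a, b in features]


def sum_readout(features):
    """Graph-level readout that sums over nodes (permutation-invariant)."""
    return [sum(column) for column in zip(*features)]


h = [[1.0, 2.0], [0.5, -1.0], [3.0, 0.0]]
perm = [2, 0, 1]

# Equivariance: relabel then apply == apply then relabel.
print(node_layer(permute(h, perm)) == permute(node_layer(h), perm))
# Invariance: the graph-level output ignores the relabeling.
print(sum_readout(node_layer(permute(h, perm))), sum_readout(node_layer(h)))
```

Floating-point sums can differ in the last bit when the summation order changes, so invariance is usually checked up to a tolerance. The small values here happen to sum exactly.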

Gilmer et al. formalized the message passing framework in 2017.9 Each layer of a message passing neural network (MPNN) works in three steps:

1. Every node computes messages from its neighbors' features.
2. It aggregates those messages with a permutation-invariant function (sum, mean, or max).
3. It updates its own representation.

After k layers, each node's representation depends on its k-hop neighborhood.9
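
A minimal Python sketch of one MPNN layer with sum aggregation on scalar node features. The `message` and `update` functions are hypothetical stand-ins for the learned functions of the framework. Running several layers on a path graph shows the k-hop receptive field.

```python
def mpnn_layer(h, neighbors, message, update):
    """One message passing step: m_i = sum_{j in N(i)} message(h_j); h_i' = update(h_i, m_i)."""
    new_h = []
    for i in range(len(h)):
        m_i = sum(message(h[j]) for j in neighbors[i])  # sum is permutation-invariant
        new_h.append(update(h[i], m_i))
    return new_h


def message(h_j):
    return 0.5 * h_j


def update(h_i, m_i):
    return h_i + m_i


# Path graph 0-1-2-3-4 with a nonzero feature only at node 0.
neighbors = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3]}
h = [1.0, 0.0, 0.0, 0.0, 0.0]
for k in range(1, 4):
    h = mpnn_layer(h, neighbors, message, update)
    reached = [i for i, v in enumerate(h) if v != 0.0]
    print(f"after {k} layer(s), nonzero at nodes {reached}")  # nodes within k hops of node 0
```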

Graph Convolutional Networks (GCNs) are a specific case. A GCN layer adds self-loops to the adjacency matrix and normalizes it symmetrically by node degree. It then multiplies the node features by the result, applies a learned weight matrix, and passes the output through a nonlinearity.11 Kipf and Welling interpreted this operation as a differentiable, parameterized generalization of the 1-dimensional Weisfeiler-Lehman graph isomorphism algorithm. As evidence, even with random weights, a 3-layer GCN on Zachary's karate club network produces embeddings that reflect community structure.5
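
The Python sketch below writes out the layer described above. It uses Kipf and Welling's renormalization with self-loops: Ã = A + I, with D̃ the degree matrix of Ã. Dense lists keep the sketch dependency-free; real implementations use sparse matrix operations. The weights are fixed for illustration rather than learned.

```python
import math


def gcn_layer(adj, h, w):
    """One GCN layer: ReLU(D~^{-1/2} (A + I) D~^{-1/2} H W).

    adj: n x n 0/1 adjacency (list of lists); h: n x f_in features; w: f_in x f_out weights.
    """
    n = len(adj)
    a_tilde = [[adj[i][j] + (1 if i == j else 0) for j in range(n)] for i in range(n)]  # add self-loops
    deg = [sum(row) for row in a_tilde]  # degrees including the self-loop, so never zero
    a_norm = [[a_tilde[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]
    f_in, f_out = len(w), len(w[0])
    # Aggregate: each node takes a degree-normalized sum over itself and its neighbors.
    agg = [[sum(a_norm[i][k] * h[k][c] for k in range(n)) for c in range(f_in)] for i in range(n)]
    # Transform with the weight matrix, then apply ReLU.
    return [[max(0.0, sum(agg[i][c] * w[c][d] for c in range(f_in))) for d in range(f_out)]
            for i in range(n)]


# Two connected nodes plus one isolated node.
adj = [[0, 1, 0], [1, 0, 0], [0, 0, 0]]
h = [[1.0], [3.0], [4.0]]
w = [[1.5]]
print(gcn_layer(adj, h, w))  # [[3.0], [3.0], [6.0]]
```

The self-loop keeps a node's own feature in the aggregation. Without it, the isolated node 2 would receive nothing, and its degree of zero would make the normalization undefined.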

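Graph Attention Networks (GATs) replace this fixed normalization with learned attention weights. Each neighbor's contribution is scored from the features of the two endpoint nodes, and the scores are normalized with a softmax over the neighborhood.9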
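
A single-head Python sketch following the scoring rule of the original GAT formulation:

- a shared linear map W,
- a learned attention vector a,
- LeakyReLU with negative slope 0.2,
- a softmax over each node's neighbors, including the node itself.

Multi-head attention and the output nonlinearity are omitted, and the parameter values are arbitrary.

```python
import math


def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x


def gat_layer(h, neighbors, w, a):
    """Single-head GAT-style layer (output nonlinearity omitted).

    h: node feature vectors; neighbors: dict node -> neighbor list (a self-loop is added);
    w: f_out x f_in weight matrix; a: attention vector of length 2 * f_out.
    Returns the new features and the attention weights alpha[i][j].
    """
    wh = [[sum(w_row[c] * h_i[c] for c in range(len(h_i))) for w_row in w] for h_i in h]
    new_h, alpha = [], {}
    for i in range(len(h)):
        nbrs = [i] + list(neighbors[i])
        # Unnormalized score e_ij = LeakyReLU(a . [W h_i || W h_j]).
        scores = [leaky_relu(sum(ak * zk for ak, zk in zip(a, wh[i] + wh[j]))) for j in nbrs]
        # Softmax over the neighborhood (subtracting the max for numerical stability).
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        alpha[i] = {j: e / total for j, e in zip(nbrs, exps)}
        new_h.append([sum(alpha[i][j] * wh[j][d] for j in nbrs) for d in range(len(w))])
    return new_h, alpha


# Path 0-1-2 with scalar features.
neighbors = {0: [1], 1: [0, 2], 2: [1]}
h = [[1.0], [2.0], [3.0]]
new_h, alpha = gat_layer(h, neighbors, w=[[1.0]], a=[0.3, 0.6])
print(new_h, alpha[1])
```

With a = 0, every score is equal and the layer reduces to plain mean aggregation. The attention vector is therefore what lets the model weight neighbors unequally.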

Geometric deep learning also addresses equivariance to continuous symmetry groups. SE(3)-equivariant networks produce outputs that transform correctly under 3D rotations and translations. This is a natural requirement for molecular and physical simulations, where quantities such as energies are invariant and forces rotate with the system.4
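
The Python sketch below uses a simple construction, not a particular published architecture. Each atom gets a vector output built from distance-weighted displacement vectors to the other atoms. The check rotates the molecule about the z-axis (a special case of a 3D rotation) and translates it. The prediction for the moved molecule matches the rotated original prediction, and the translation has no effect. The radial function is a hypothetical choice.

```python
import math


def radial_weight(r):
    """Hypothetical radial function; any function of the distance alone works."""
    return math.exp(-r)


def vector_outputs(pos):
    """Per-atom vectors v_i = sum_{j != i} (x_i - x_j) * radial_weight(|x_i - x_j|).

    Only relative positions enter, so translations cancel, and each term is a
    distance-weighted displacement, so the outputs rotate together with the input.
    """
    out = []
    for i, x_i in enumerate(pos):
        v = [0.0, 0.0, 0.0]
        for j, x_j in enumerate(pos):
            if i != j:
                d = [a - b for a, b in zip(x_i, x_j)]
                w = radial_weight(math.dist(x_i, x_j))
                v = [vc + w * dc for vc, dc in zip(v, d)]
        out.append(v)
    return out


def rotate_z(p, theta):
    """Rotate a 3D point about the z-axis by angle theta."""
    c, s = math.cos(theta), math.sin(theta)
    return [c * p[0] - s * p[1], s * p[0] + c * p[1], p[2]]


pos = [[0.0, 0.0, 0.0], [1.0, 0.2, -0.3], [-0.4, 1.1, 0.5]]
theta, shift = 0.7, [2.0, -1.0, 0.5]
moved = [[c + t for c, t in zip(rotate_z(p, theta), shift)] for p in pos]

out_moved = vector_outputs(moved)                                # transform, then predict
out_rotated = [rotate_z(v, theta) for v in vector_outputs(pos)]  # predict, then rotate
print(max(abs(a - b) for u, v in zip(out_moved, out_rotated) for a, b in zip(u, v)))
```

The construction uses only distances and displacements, so it is also equivariant to reflections, that is, to the larger group E(3).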

Constructing equivariant layers uses representation theory: an equivariant linear map between feature spaces is an intertwiner between representations of the symmetry group G.4 For the rotation group SO(3), this involves constraining convolution kernels using spherical harmonics and Wigner matrices.4
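
The Python sketch below gives a lower-dimensional illustration, using SO(2) in place of SO(3) to keep the arithmetic small. It checks the intertwiner condition W ρ(g) = ρ(g) W for the standard representation of 2D rotations on R^2. Maps of the form pI + qJ, where J is the 90° rotation, satisfy the condition; a generic shear does not. The matrices and angles are arbitrary examples.

```python
import math


def rot(theta):
    """2D rotation matrix: the standard representation of SO(2) on R^2."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def is_intertwiner(w, thetas, tol=1e-12):
    """Check W R(theta) == R(theta) W for every sampled angle (equivariance of x -> W x)."""
    for theta in thetas:
        lhs, rhs = matmul(w, rot(theta)), matmul(rot(theta), w)
        if any(abs(lhs[i][j] - rhs[i][j]) > tol for i in range(2) for j in range(2)):
            return False
    return True


angles = [0.1, 1.0, 2.5, -0.8]
p, q = 0.7, -1.3
w_equivariant = [[p, -q], [q, p]]   # p * I + q * J, where J is rotation by 90 degrees
w_shear = [[1.0, 2.0], [0.0, 1.0]]  # a generic linear map
print(is_intertwiner(w_equivariant, angles), is_intertwiner(w_shear, angles))  # True False
```

The equivariance constraint reduces the four free entries of a 2 × 2 matrix to two (p and q). For SO(3), the analogous constraints are what restrict convolution kernels to the spherical-harmonic form described above.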

On curved surfaces and general manifolds there is, in general, no canonical global coordinate system. Feature vectors at different points are therefore expressed in different local frames and cannot be directly compared.4 Gauge equivariant neural networks address this by defining convolutions on fiber bundles, using connections and parallel transport from differential geometry to move features between points. The network's output is then independent of the arbitrary choice of local coordinate frames (gauges).2,4

The geometric framework reveals that several well-known architectures share common structure.2

CNNs are group convolutions over the translation group on a grid.2 GNNs are permutation-equivariant message passing networks on graphs.2 Transformers operate on fully connected graphs — self-attention is message passing where every token attends to every other token, with edge weights computed dynamically.2 Recurrent neural networks process sequences, which are chains (a graph with linear connectivity) with causal ordering.2 Spherical CNNs are SO(3)-equivariant networks on the sphere, using spherical harmonics as a Fourier basis.4
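
The Python sketch below makes the message-passing reading of self-attention concrete. It assumes identity query, key, and value projections, which is a simplification. Without positional encodings, reordering the tokens simply reorders the outputs: the layer is permutation-equivariant, like a GNN on a complete graph. Sequence order has to be supplied through positional encodings.

```python
import math


def self_attention(x):
    """Single-head self-attention with identity query/key/value projections (a simplification).

    Every token i receives a message from every token j, weighted by
    softmax_j(x_i . x_j / sqrt(d)): message passing on a complete graph with dynamic edge weights.
    """
    d = len(x[0])
    out = []
    for x_i in x:
        scores = [sum(a * b for a, b in zip(x_i, x_j)) / math.sqrt(d) for x_j in x]
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        out.append([sum(w * x_j[c] for w, x_j in zip(weights, x)) for c in range(d)])
    return out


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
perm = [2, 0, 1]
full = self_attention(tokens)
permuted_first = self_attention([tokens[p] for p in perm])  # reorder, then attend
permuted_after = [full[p] for p in perm]                     # attend, then reorder
print(max(abs(a - b) for u, v in zip(permuted_first, permuted_after) for a, b in zip(u, v)))
```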

Standard message passing networks are limited to local neighborhoods. Each layer aggregates information only from immediate neighbors, so capturing long-range dependencies requires stacking many layers, which can lead to over-smoothing.9 Graph Transformers address this by applying global self-attention over all nodes, so every node can attend to every other node within a single layer.12
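
A weight-free Python sketch of the over-smoothing effect described above. It deliberately simplifies to plain mean aggregation with self-loops, with no learned weights or nonlinearities. Each round replaces every node's value with a convex combination of values, so the gap between the largest and smallest feature can never grow. On a connected graph, that gap shrinks toward zero.

```python
def mean_aggregate(h, neighbors):
    """One weight-free aggregation round: each node averages itself and its neighbors."""
    return [(h[i] + sum(h[j] for j in neighbors[i])) / (1 + len(neighbors[i]))
            for i in range(len(h))]


def spread(h):
    """Gap between the largest and smallest node feature."""
    return max(h) - min(h)


neighbors = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3]}  # path graph 0-1-2-3-4
h = [5.0, -1.0, 2.0, 0.0, 3.0]
spreads = [spread(h)]
for _ in range(200):
    h = mean_aggregate(h, neighbors)
    spreads.append(spread(h))
print(spreads[0], spreads[1], spreads[10], spreads[200])
```

After many rounds every node carries essentially the same value. A classifier stacked on top can then no longer tell nodes apart, which is the sense in which over-smoothing limits useful depth.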

Graphormer, introduced by Ying et al. at Microsoft in 2021, encoded graph structure into a standard Transformer via three additions:

- Centrality encoding: learnable embeddings of node degree, added to the input features.
- Spatial encoding: a learnable bias indexed by shortest-path distance, added to the attention scores.
- Edge encoding: edge features aggregated along shortest paths into an additional attention bias.

Graphormer won the PCQM4M-LSC molecular property prediction track of the OGB Large-Scale Challenge at KDD Cup 2021, outperforming the GNN-based submissions.12
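
A Python sketch of the spatial-encoding idea only. It computes shortest-path (hop) distances with breadth-first search and adds a per-distance bias to the attention scores. Several details are illustrative assumptions: the bias values, the clipping of long distances, and the separate bias for unreachable pairs. Graphormer's full design, with multiple heads and the centrality and edge encodings, is not reproduced.

```python
from collections import deque


def shortest_path_distances(neighbors):
    """All-pairs hop distances via BFS from every node; -1 marks unreachable pairs."""
    n = len(neighbors)
    dist = [[-1] * n for _ in range(n)]
    for source in range(n):
        dist[source][source] = 0
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in neighbors[u]:
                if dist[source][v] == -1:
                    dist[source][v] = dist[source][u] + 1
                    queue.append(v)
    return dist


def add_spatial_bias(scores, dist, spd_bias, unreachable_bias):
    """Add a bias looked up by shortest-path distance to raw attention scores (before softmax).

    spd_bias stands in for a learnable table indexed by distance; distances beyond the
    table are clipped to its last entry (an assumption of this sketch).
    """
    n = len(scores)
    return [[scores[i][j] + (spd_bias[min(dist[i][j], len(spd_bias) - 1)]
                             if dist[i][j] >= 0 else unreachable_bias)
             for j in range(n)] for i in range(n)]


# Path 0-1-2-3 plus an isolated node 4; all raw scores zero so only the bias is visible.
neighbors = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2], 4: []}
dist = shortest_path_distances(neighbors)
zero_scores = [[0.0] * 5 for _ in range(5)]
biased = add_spatial_bias(zero_scores, dist, spd_bias=[0.0, 0.5, -0.5, -1.0], unreachable_bias=-5.0)
print(dist[0], biased[0])
```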

The GPS (General, Powerful, Scalable) framework by Rampasek et al. at NeurIPS 2022 proposed a modular recipe combining three ingredients:

- positional/structural encodings, such as random-walk or Laplacian eigenvector encodings,
- a local message passing layer,
- a global attention layer.13

Each GPS layer runs the local and global components in parallel and sums their outputs. When the global component uses a linear-attention approximation such as Performer, the layer's cost grows linearly with the number of nodes and edges, while the message passing component retains its inductive biases.13
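
One concrete example of the structural encodings mentioned above is a random-walk encoding. The Python sketch below computes it under one common definition: node i's features are its k-step return probabilities. This is an illustration, not the GPS reference code; dense matrix powers are used only because the graphs are tiny.

```python
def random_walk_encoding(adj, k_max):
    """Random-walk structural encoding: for each node i, the return probabilities
    [(D^-1 A)^k]_ii of a simple random walk after k = 1..k_max steps."""
    n = len(adj)
    deg = [sum(row) for row in adj]
    walk = [[adj[i][j] / deg[i] if deg[i] else 0.0 for j in range(n)] for i in range(n)]
    power = [row[:] for row in walk]  # (D^-1 A)^1
    encoding = [[power[i][i]] for i in range(n)]
    for _ in range(2, k_max + 1):
        power = [[sum(power[i][m] * walk[m][j] for m in range(n)) for j in range(n)]
                 for i in range(n)]
        for i in range(n):
            encoding[i].append(power[i][i])
    return encoding


triangle = [[0, 1, 1], [1, 0, 1], [1, 1, 0]]
square = [[0, 1, 0, 1], [1, 0, 1, 0], [0, 1, 0, 1], [1, 0, 1, 0]]
print(random_walk_encoding(triangle, 4)[0])  # odd-length returns are possible (3-cycle)
print(random_walk_encoding(square, 4)[0])    # bipartite: odd-step returns are impossible
```

The encoding exposes cycle structure that a node cannot see from its immediate neighbors. Here, a nonzero 3-step return probability reveals that node 0 lies on a triangle.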

These architectures address the expressivity bottleneck of standard MPNNs, whose ability to distinguish non-isomorphic graphs is bounded by the 1-WL isomorphism test. They do so by incorporating global structural information directly into the attention mechanism.12,13
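
The classic failure case can be checked with a short Python sketch of 1-WL color refinement. A single 6-cycle and two disjoint triangles are non-isomorphic, yet every node in both graphs has degree 2, so refinement never separates them. Standard MPNNs are at most as powerful as 1-WL, so none of them can tell these graphs apart either. The function name and the nested-tuple color encoding are choices made for this sketch.

```python
from collections import Counter


def wl_histogram(neighbors, rounds=3):
    """1-WL color refinement. Each round, a node's new color is the pair
    (old color, sorted multiset of neighbor colors). Colors are kept as nested tuples
    so that histograms from different graphs are directly comparable."""
    colors = {v: () for v in neighbors}  # every node starts with the same color
    for _ in range(rounds):
        colors = {v: (colors[v], tuple(sorted(colors[u] for u in neighbors[v])))
                  for v in neighbors}
    return Counter(colors.values())


hexagon = {i: [(i - 1) % 6, (i + 1) % 6] for i in range(6)}  # one 6-cycle
two_triangles = {0: [1, 2], 1: [0, 2], 2: [0, 1], 3: [4, 5], 4: [3, 5], 5: [3, 4]}
path6 = {i: [j for j in (i - 1, i + 1) if 0 <= j < 6] for i in range(6)}

print(wl_histogram(hexagon) == wl_histogram(two_triangles))  # 1-WL cannot tell them apart
print(wl_histogram(hexagon) == wl_histogram(path6))          # degree differences are detected
```

Shortest-path distances, like those used in Graphormer's spatial encoding, do separate the two graphs. The 6-cycle contains node pairs at distance 3, while two disjoint triangles contain only distances 0 and 1 plus unreachable pairs.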

AlphaFold2, developed by DeepMind, predicts 3D protein structures from amino acid sequences with atomic-level accuracy.10 It processes protein data as a spatial graph, and its structure module uses an attention mechanism (Invariant Point Attention) designed to respect the SE(3) symmetry of 3D space.10 AlphaFold2 won CASP14 in 2020 with a median GDT_TS score of 92.4. The AlphaFold Protein Structure Database has since been expanded to over 200 million predicted structures.10

Molecules are naturally represented as graphs with atoms as nodes and bonds as edges.9 GNNs predict properties such as toxicity, solubility, and binding affinity directly from molecular graphs without hand-crafted descriptors.9 SchNet and DimeNet extend this to 3D molecular geometry. SchNet uses continuous-filter convolutions whose filters are functions of interatomic distances, and DimeNet adds directional message passing that also incorporates bond angles.4
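
A Python sketch of the continuous-filter idea behind SchNet. It is simplified to scalar features and to a filter that is linear in Gaussian radial basis features of the interatomic distance. The centers, γ, cutoff, and weights are illustrative assumptions. The layer sees geometry only through distances, so rotating and translating the molecule leaves its output unchanged.

```python
import math


def rbf_expand(r, centers, gamma=10.0):
    """Gaussian radial basis expansion of a distance: exp(-gamma * (r - mu_k)^2)."""
    return [math.exp(-gamma * (r - mu) ** 2) for mu in centers]


def cfconv(h, pos, filter_weights, centers, cutoff=5.0):
    """Continuous-filter convolution sketch: h_i' = sum_{j != i, r_ij <= cutoff} h_j * W(r_ij).

    W(r) here is a linear function of the RBF features; SchNet instead generates
    filters with a small neural network and uses vector-valued atom features.
    """
    out = []
    for i, x_i in enumerate(pos):
        acc = 0.0
        for j, x_j in enumerate(pos):
            r = math.dist(x_i, x_j)
            if i == j or r > cutoff:
                continue
            w = sum(fw * e for fw, e in zip(filter_weights, rbf_expand(r, centers)))
            acc += h[j] * w
        out.append(acc)
    return out


centers = [0.25 * k for k in range(20)]           # RBF centers from 0 to 4.75
filter_weights = [math.sin(k) for k in range(20)]  # stand-in for learned weights
h = [1.0, 2.0, -1.0]                               # scalar atom features
pos = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.5, 0.5)]
# Rotate 90 degrees about z and translate: interatomic distances are unchanged.
moved = [(-y + 1.0, x + 2.0, z + 3.0) for x, y, z in pos]
print(cfconv(h, pos, filter_weights, centers), cfconv(h, moved, filter_weights, centers))
```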

Data from the Large Hadron Collider — jets of particles, detector hits, interaction patterns — is structured as point clouds and graphs. GNNs have been applied to jet classification, particle tracking, and event reconstruction at CERN.2

MeshCNN applies convolutions on mesh edges. PointNet processes raw point clouds by applying a shared per-point network followed by a symmetric max-pooling operation, which makes it invariant to the order of the points.3 These models perform shape classification, segmentation, and correspondence on 3D geometry data.3
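
A Python sketch of the PointNet design principle, reduced to a single shared layer with hypothetical weights. The published network is deeper and also learns input alignment transforms.

```python
def shared_mlp(point, weights, biases):
    """The same one-layer perceptron with ReLU, applied to every point (hypothetical parameters)."""
    return [max(0.0, sum(w * p for w, p in zip(row, point)) + b)
            for row, b in zip(weights, biases)]


def pointnet_global_feature(points, weights, biases):
    """Shared per-point MLP followed by max-pooling over points.

    Max is a symmetric function, so the descriptor ignores the order of the points."""
    per_point = [shared_mlp(p, weights, biases) for p in points]
    return [max(channel) for channel in zip(*per_point)]


weights = [[1.0, 0.0, -1.0], [0.5, 0.5, 0.5], [-1.0, 2.0, 0.0]]
biases = [0.0, -0.2, 0.1]
cloud = [(0.1, 0.2, 0.3), (1.0, -0.5, 0.0), (-0.3, 0.8, 0.4), (0.0, 0.0, 1.0)]
print(pointnet_global_feature(cloud, weights, biases))
print(pointnet_global_feature(cloud[::-1], weights, biases))  # same values, reversed order
```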

PinSage, deployed at Pinterest, applies graph convolutions to a bipartite graph of pins and boards with billions of nodes. It uses random-walk-based neighborhood sampling to generate item embeddings for recommendation.2

GraphCast, developed by DeepMind and published in Science in 2023, models the Earth's atmosphere as a multi-mesh graph and uses a GNN-based encoder-processor-decoder architecture to predict hundreds of weather variables at 0.25° resolution globally.14 Trained on 39 years of ERA5 reanalysis data, GraphCast produces 10-day forecasts in under one minute on a single TPU, compared to hours of supercomputer time for traditional numerical weather prediction.14 It outperformed ECMWF's HRES operational system on 90% of 1,380 verification targets, including better prediction of tropical cyclone tracks, atmospheric rivers, and extreme temperature events.14

Graph Networks for Materials Exploration (GNoME), published in Nature in 2023 by Merchant et al. at DeepMind, used GNNs to predict the stability of inorganic crystal structures.15 GNoME represented crystals as graphs with atoms as nodes and bonds as edges, then iteratively trained on available DFT-computed data and used the model to filter candidates for further computation.15 The system discovered 2.2 million new crystal structures, of which 380,000 were predicted to be thermodynamically stable — an order of magnitude increase over all previously known stable crystals in the Materials Project database.15

Spherical CNNs process atmospheric and climate data on the Earth's surface using SO(3)-equivariant convolutions, avoiding distortions from map projections.4

In deep GNNs, the over-smoothing problem causes node representations to converge to indistinguishable states as layers increase, limiting effective depth.5 The expressivity of message passing networks is bounded by the Weisfeiler-Lehman hierarchy. Standard MPNNs are at most as powerful as the 1-WL test, so they cannot distinguish any pair of non-isomorphic graphs that 1-WL fails to distinguish.9 Graph Transformers partially address this by incorporating global attention, though at higher computational cost.12,13 Scalability to graphs with billions of nodes requires subgraph sampling and distributed computation.5 The theoretical relationship between equivariance and generalization is an active area of research; early results show that, under certain assumptions, equivariant networks are provably more sample-efficient.4

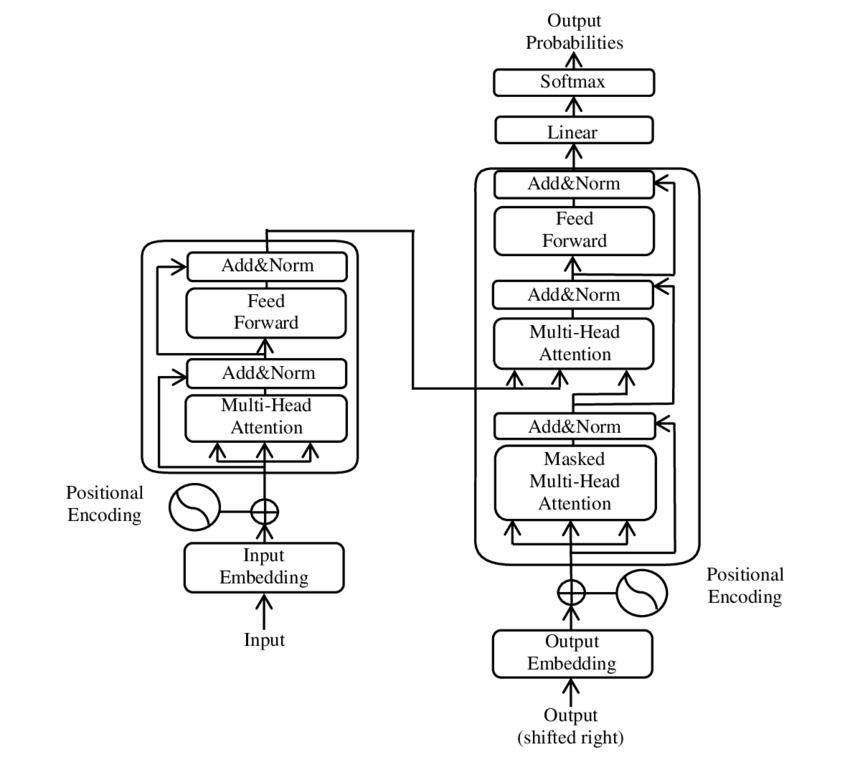

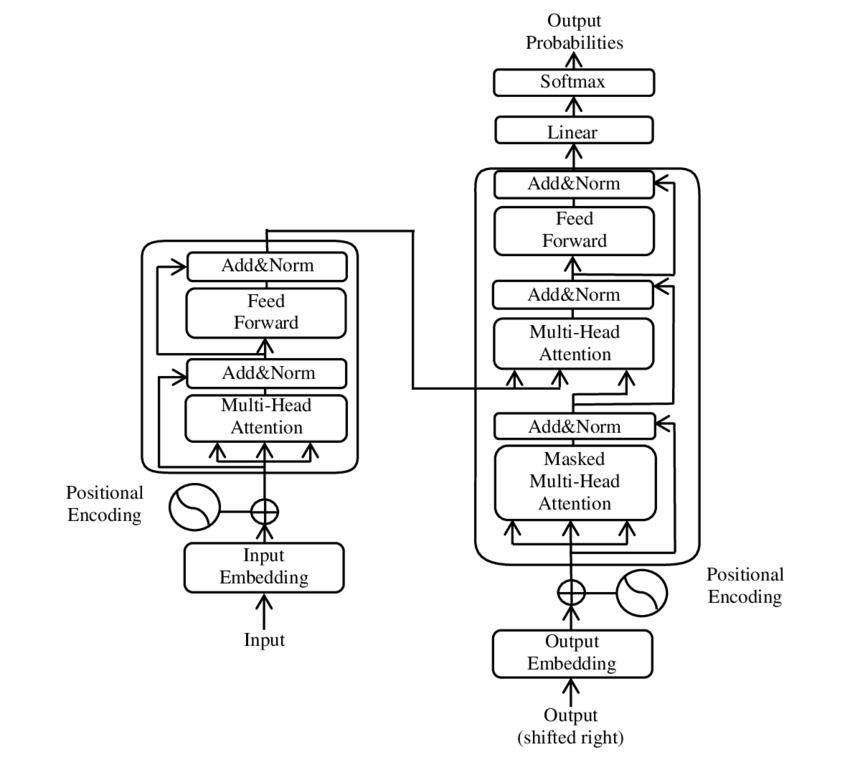
